In a post published last week, Meta asked the question, "Where are the robots?" The answer is simple: They're here. You just have to know where to look. Let's leave aside talk of cars and driver assistance and concentrate on things we all tend to agree are robots. For starters, that Amazon delivery doesn't reach you without robotic assistance.

A more pertinent question would be: Why aren't there more robots? And, more specifically, why aren't there more robots in my house right now? It's a complex question with many nuances, many of which boil down to current hardware limitations around the concept of a "general-purpose" robot. Roomba is a robot. There are plenty of Roombas in the world, and that's largely because Roombas do one thing well (an extra decade of R&D has helped move things beyond a "pretty good" state).

It's not so much that the premise of the question is flawed; it's more a matter of rephrasing it slightly. "Why aren't there more robots?" is a perfectly valid question for someone who isn't a roboticist.

Meta's version of the question is software-focused, and that's fair enough. In recent years, there has been an explosion of startups tackling several important categories, such as robot learning, deployment/management, and no-code and low-code solutions. Special mention goes to the nearly two decades of research and development that went into creating, maintaining and improving ROS (Robot Operating System, open source). Fittingly, longtime ROS stewards Open Robotics were acquired by Alphabet, which has been doing its own work in the category through in-house efforts Intrinsic and Everyday Robots (although those efforts were disproportionately affected by recent cutbacks across the organization).

No doubt Meta/Facebook has its own share of skunkworks projects that pop up from time to time. I haven't seen anything so far to suggest they're on par with what Alphabet/Google has explored over the years, but it's always interesting to see some of these projects surface. In an announcement that I strongly suspect is related to the current wave of generative AI discussion, the social media giant has shared what it calls "two major advances toward general-purpose embodied AI agents capable of performing challenging sensorimotor skills."

Quoting directly here:

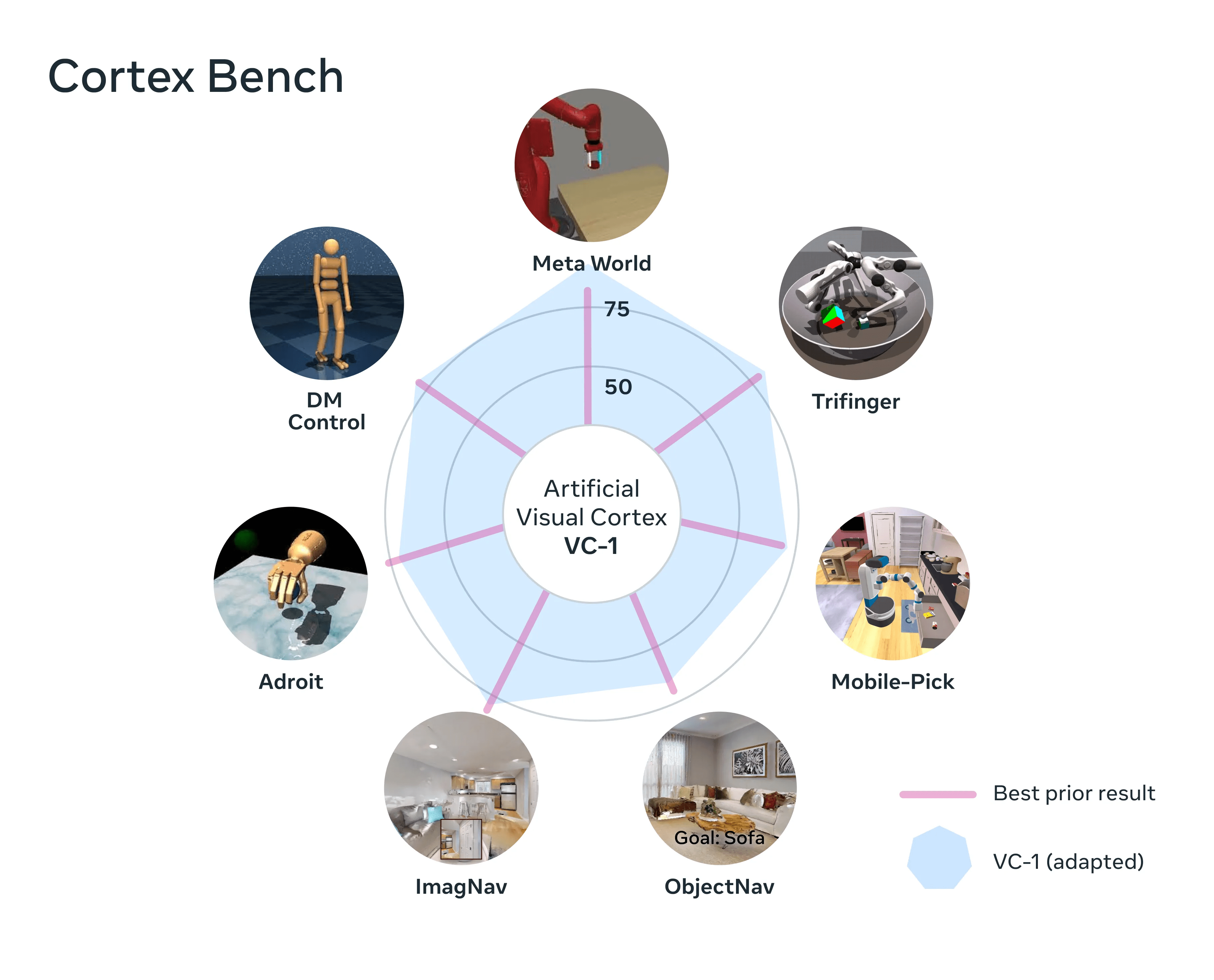

An artificial visual cortex (called VC-1): A single perception model that, for the first time, supports a diverse range of sensorimotor skills, environments, and embodiments. VC-1 is trained on videos of people performing everyday tasks from the groundbreaking Ego4D dataset created by Meta AI and academic partners. And VC-1 matches or exceeds the best-known results on 17 different sensorimotor tasks in virtual environments (the CortexBench benchmark).

A new approach called adaptive (sensorimotor) skill coordination (ASC), which achieves near-perfect performance (98 percent success) on the challenging task of robotic mobile manipulation (navigating to an object, picking it up, navigating to another location, placing the object, repeating) in physical environments.

Image: Meta

Interesting research, to be sure, and I'm excited to potentially dig into some of it. The phrase "general purpose" is being thrown around a lot these days. It's a perpetually interesting topic of conversation in robotics, but there's been a massive proliferation of general-purpose humanoid robots coming out of the woodwork in the wake of the Tesla bot's introduction. For years, people have said things like, "Say what you want about Musk, but Tesla has sparked renewed interest in electric vehicles," and that's pretty much how I feel about Optimus right now. It has served an important dual function, renewing the discussion of form factor while providing a clear picture to point to when explaining how difficult this is. Is it possible to dramatically raise public expectations and moderate them at the same time?

Again, those conversations dovetail nicely with all these GPT advances. This is all very impressive, but Rodney Brooks put the danger of conflating the two quite well a few weeks ago: "I think people are overly optimistic. They are mistaking performance for competence. You see a good performance in a human being, you can say what they're competent at. We're pretty good at modeling people, but those same models don't apply. You see great performance from one of these systems, but it doesn't tell you how it's going to perform in the adjacent space or with different data."

Image: Covariant

Of course, that didn't stop me from asking most of the people I spoke with at ProMat for their thoughts on the future role of generative AI in robotics. The responses were wide-ranging. Some shrugged, others saw a very regimented role for the technology, and still others were extremely optimistic about what all this means for the future. Peter Chen, the CEO of Covariant (which just raised $75 million), offered some interesting context on the shift toward generalized AI models:

Before the recent ChatGPT, there were a lot of natural language processing AIs. Search, translate, sentiment detection, spam detection – there was a ton of natural language AI out there. The approach before GPT was, for each use case, to train a specific AI, using a smaller subset of data.

Looking at the results now, GPT outperforms the dedicated translation models, and it isn't even specifically trained to translate. Basically, the foundation model approach is this: instead of using small amounts of data specific to one situation, or training a model specific to one circumstance, let's train a large, generalized foundation model on a lot more data, so the AI is more generalized.

Of course, Covariant is currently very focused on pick and place. Frankly, it's a big enough challenge to keep them busy for a long time. But one of the promises that systems like this offer is real-world training. Companies that actually have real robots doing real jobs in the real world are building extremely powerful databases and models of how machines interact with the world around them.

It's not hard to see how many of the seemingly disparate building blocks being strengthened by researchers and companies might one day come together to create a truly general-purpose system. When the hardware and AI are at that level, there will be a seemingly endless trove of field data to train them with.

At the moment, the platform approach makes a lot of sense. With Spot, for example, Boston Dynamics is effectively selling customers on an iPhone-style model. First, it produces the first generation of an impressive piece of hardware. It then offers an SDK to interested parties. If things go as planned, suddenly you have this product doing things your team never imagined. Assuming that doesn't involve mounting a gun to the back of the product (per BD's guidelines), that's an exciting proposition.

Image: 1X

It is too early to say anything definitive about the NEO robot from 1X Technologies, beyond the fact that the firm clearly hopes to live right at the intersection of robotics and generative AI. It certainly has a powerful ally in OpenAI. The generative AI giant's Startup Fund led a $23.5 million round, which also featured Tiger Global, among others.

Says 1X founder and CEO Bernt Øivind Børnich, "1X is delighted to have OpenAI lead this round because we are aligned on our missions: to thoughtfully integrate emerging technology into people's daily lives. With the support of our investors, we will continue to make significant advances in the field of robotics and augment the global labor market."

An interesting note on that (at least to me) is that 1X has been around for a minute. The Norwegian firm was known as Halodi until its very recent rebranding (exactly one month ago). You only have to go back a year or two to see the beginnings of the humanoid form factor the company was developing for food service. The technology definitely looks more sophisticated than its 2021 counterpart, but the wheeled base shows just how far it has to go to reach some version of the robot we see in its renders.

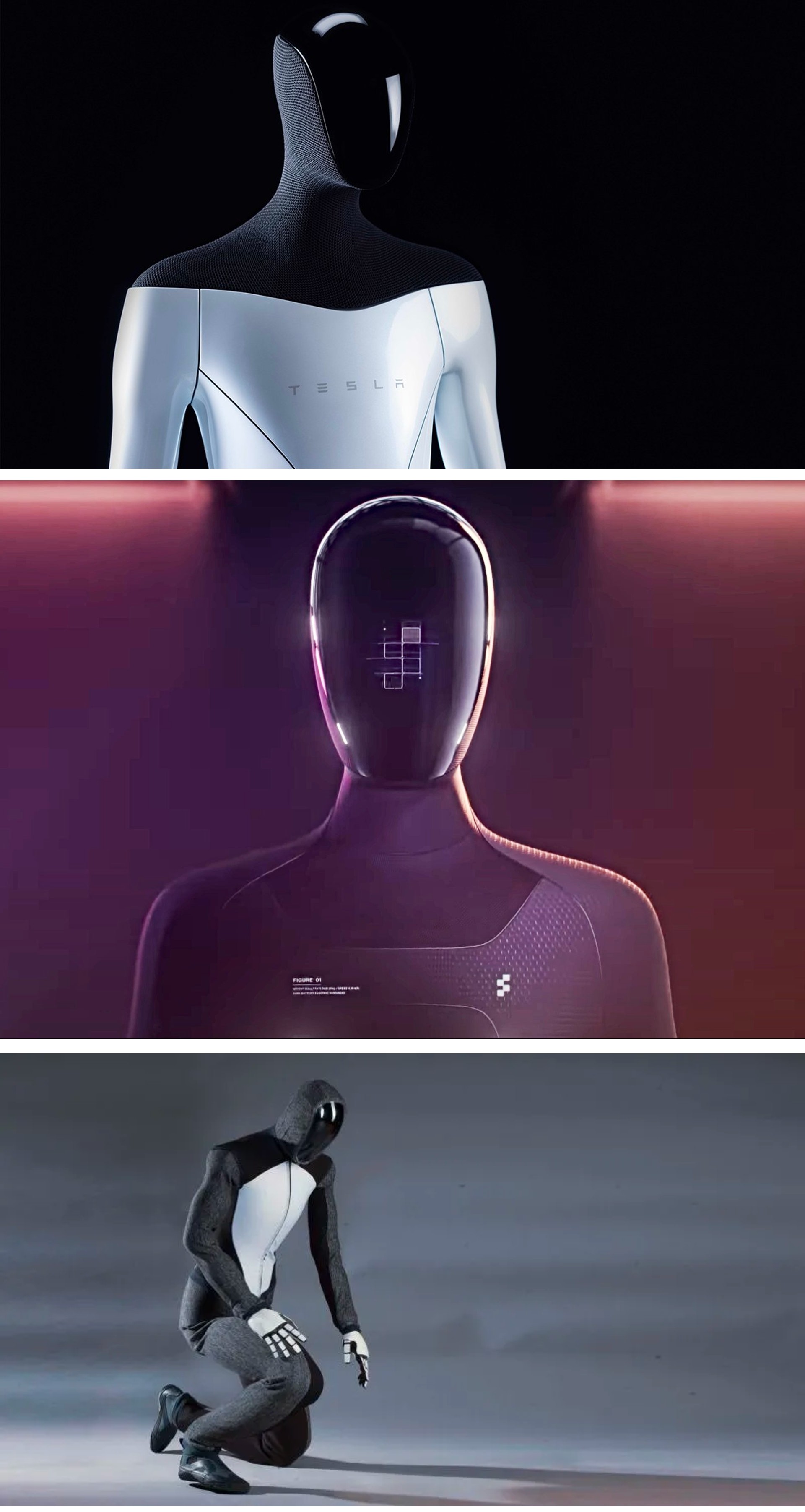

Images: Tesla/Figure/1X

From top to bottom, these are renders of the Tesla Optimus, Figure 01, and 1X Neo. They're not direct copies, obviously, but they certainly look like they could be cousins. Neo is the one who insists on wearing a hoodie, even on formal occasions.

Image: MIT CSAIL

In parallel, there are some interesting research projects. One is DribbleBot, from MIT. When you really think about it, playing soccer is a great way to test locomotion. There's a reason RoboCup has been around for more than 25 years. In DribbleBot's case, however, the challenge is uneven terrain, including things like grass, mud, and sand.

Says MIT professor Pulkit Agrawal:

If you look around you today, most robots have wheels. But imagine there's a disaster scenario, a flood or an earthquake, and we want robots to help humans in the search and rescue process. We need the machines to traverse terrain that is not flat, and wheeled robots cannot traverse such landscapes. The goal of studying legged robots is to go into realms beyond the reach of current robotic systems.

Image: University of California Los Angeles

Another research project is from the UCLA Samueli School of Engineering, which recently published findings from its work around origami robots. Origami MechanoBots, or "OrigaMechs," rely on sensors embedded in their thin polyester building blocks. Principal investigator Ankur Mehta has some pretty far-flung plans for the technology.

"These types of dangerous or unpredictable scenarios, such as during a natural or man-made disaster, could be where origami robots prove especially useful," he said in a post. "Robots could be designed for special functions and manufactured on demand very quickly. Also, while it's a long way off, there could be environments on other planets where scout bots that are impervious to those scenarios would be highly desirable."